
The “as code” approach has spread to virtually every facet of the modern tech company. Why should data analytics be any different? A modern analytics project can be no less complex than, say, an Infrastructure as Code setup, and it can also benefit from versioning, automation, and collaborative coding tools.

In this article, you’ll learn how to define an analytics solution as code, set up CI/CD pipelines for such a solution, and integrate it with your infrastructure.

If you’d rather read about the benefits of analytics as code compared to traditional solutions, here is an article discussing just that!

GoodData’s Take on Analytics as Code

Analytics as code is not new to GoodData. We’ve had our Declarative API for a while now. Its main purpose is to version analytics projects, copy them between different instances of GoodData, and allow the metadata to be manipulated in a pipeline.

Python SDK is built on top of the Declarative API and takes it to a whole other level. A project can be defined entirely programmatically, or loaded from the Declarative API and manipulated with Python scripts.

GoodData for VS Code is our latest addition to the toolset and the topic of this article. Our goal with this tool is to introduce analytics engineers to software development best practices like coding inside an IDE, contributing to GitHub, opening Pull Requests, and using CI/CD pipelines for deployment.

GoodData for VS Code

GoodData for VS Code consists of two complementary tools: a VS Code extension and a CLI utility. It also defines a language syntax, that is, how you describe analytics objects in code. Let’s go through each of these next.

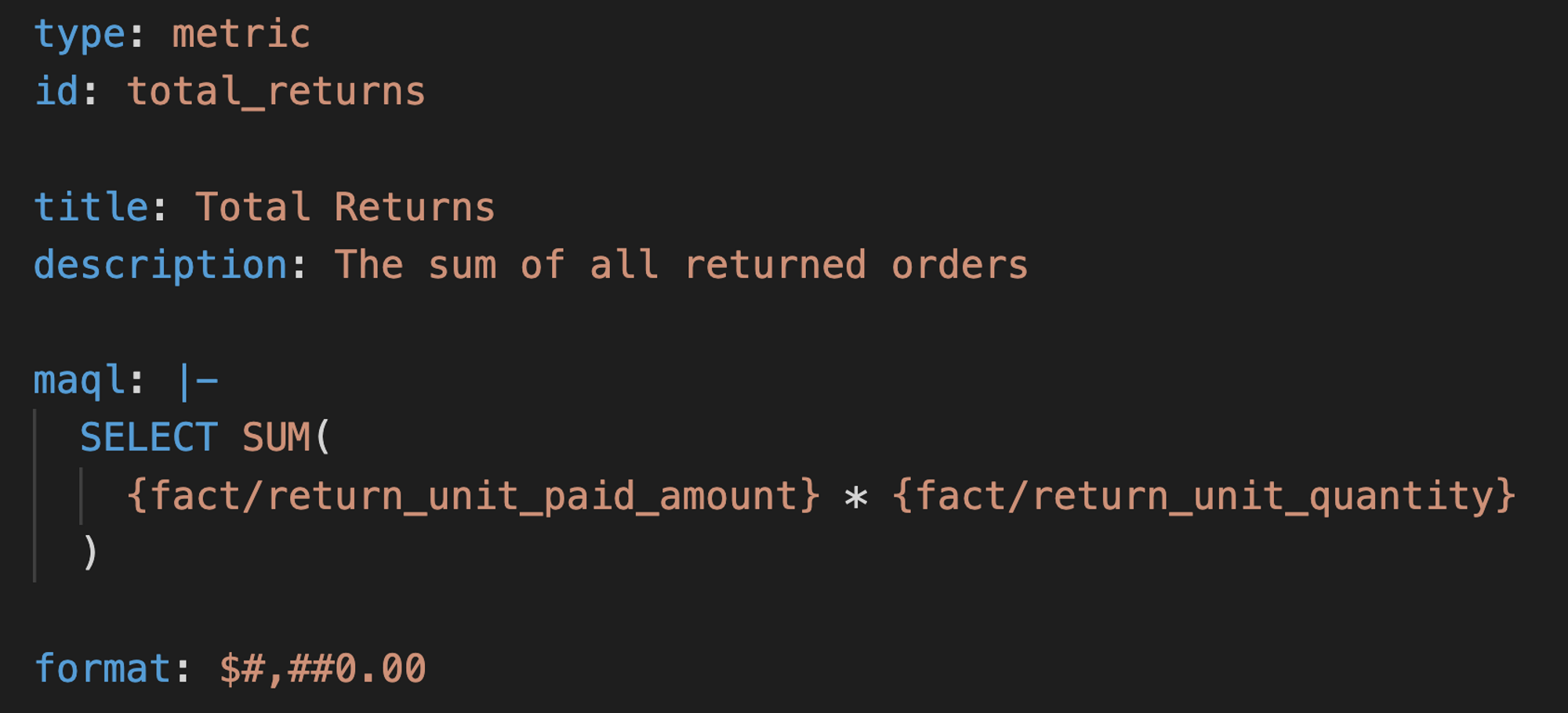

Language Syntax

The GoodData for VS Code language syntax is based on YAML, which we chose for its brevity and simplicity.

Our Python SDK also uses YAML to store declarative definitions in files for versioning. However, these are two different formats. GoodData for VS Code’s format focuses on being human-friendly and brief, while the Python SDK format strictly follows the REST API schema of our server. These tools have different use cases in mind and, as they evolve further, we will decide whether to eventually merge them or let them occupy their own niches.

Currently, GoodData for VS Code lets you define datasets and metrics. Together, these objects describe the semantic layer, the foundation of an analytics project.

We’re planning to support visualizations and dashboards in GoodData for VS Code in upcoming releases, which will allow you to define a whole analytics project as code.

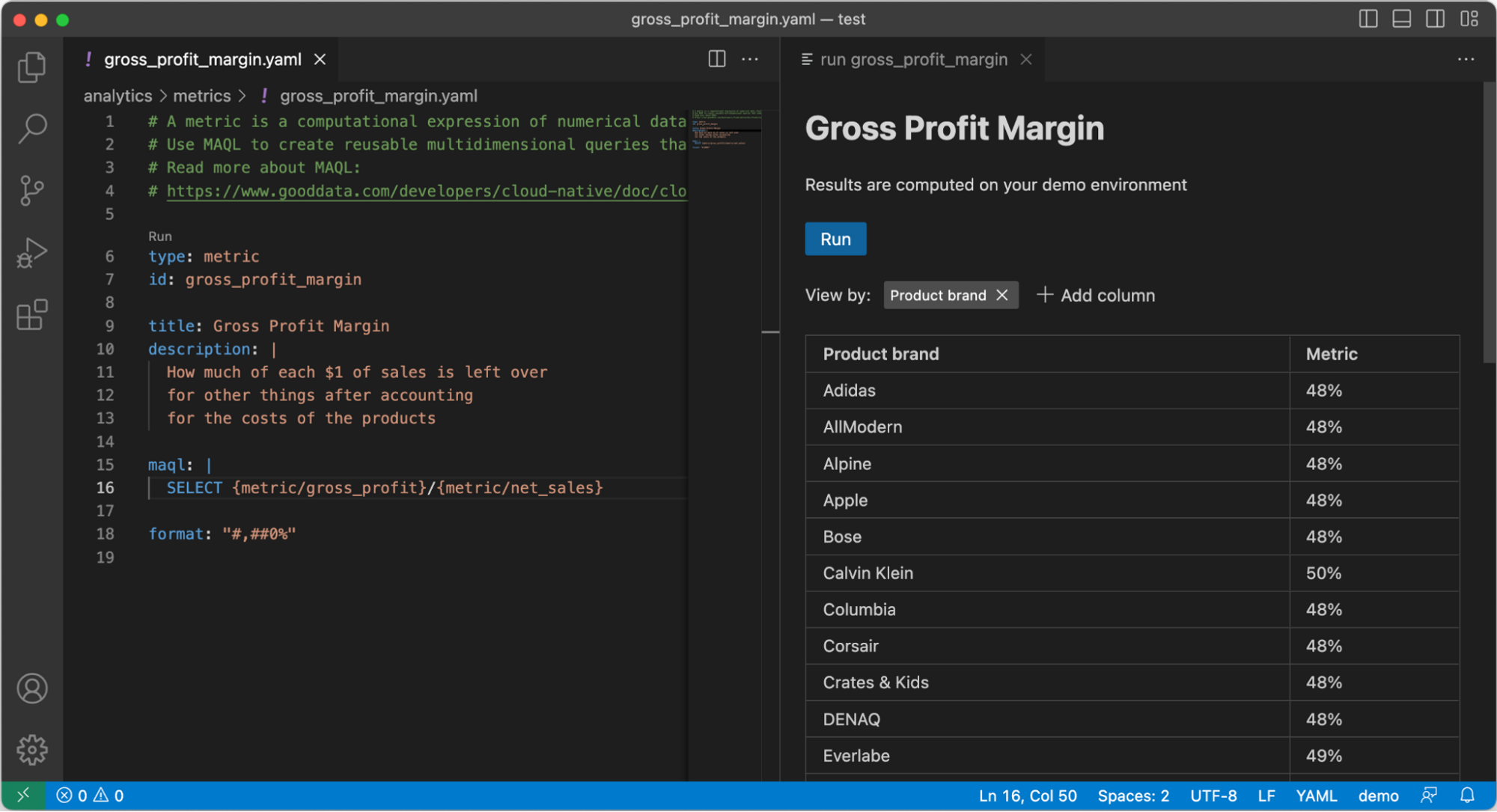

VS Code Extension

VS Code has decent support for YAML file editing out of the box, especially if you attach the right JSON schema. However, it still lacks the context needed to run semantic validation or suggest the right autocomplete option. That is why we created our extension for VS Code. Here are some features that the GoodData extension packs:

- Syntax highlighting: Unlike the built-in syntax highlighting, our extension also highlights ids and references between objects, making it easier to navigate the document.

- Validation: We’ve put a lot of effort into analytics project validation. You get the standard schema validation and semantic validation for every file, as you would expect. But we went even further and added contextual validation. Your project files are not only cross-validated within the project but also validated against your database to ensure you’re referencing only existing tables and columns. This also opens some possibilities for future integration with other tools in your stack, like dbt or Meltano.

- Autocomplete: It does what you expect and suggests valid options for a given property as you type.

- Metric preview: The preview feature is a huge productivity booster. It lets you preview your datasets and metrics right from VS Code, without the need to switch to the browser to check the results.
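To make the idea of cross-validation and database validation more concrete, here is a minimal Python sketch of such checks. It is only an illustration under stated assumptions, not the extension’s actual implementation: the `Dataset` and `Metric` classes, the `dataset_id/field_id` reference format, and the `validate_project` function are all hypothetical, and the database schema is passed in as a plain dictionary instead of being read from a real database.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Dataset:
    # Hypothetical model: a dataset maps field ids to columns of one table.
    id: str
    table: str
    fields: dict[str, str]  # field id -> source column name


@dataclass
class Metric:
    # Hypothetical model: a metric lists the "dataset_id/field_id" it uses.
    id: str
    references: list[str]


def validate_project(datasets, metrics, db_schema):
    """Return a list of human-readable validation errors.

    db_schema maps table names to sets of column names (an assumption
    standing in for a live database lookup).
    """
    errors = []
    known_fields = set()

    # Database validation: every table and column must exist.
    for ds in datasets:
        columns = db_schema.get(ds.table)
        if columns is None:
            errors.append(f"dataset {ds.id}: table {ds.table} does not exist")
        for field_id, column in ds.fields.items():
            known_fields.add(f"{ds.id}/{field_id}")
            if columns is not None and column not in columns:
                errors.append(
                    f"dataset {ds.id}: column {ds.table}.{column} does not exist"
                )

    # Cross-validation: every metric reference must point to a known field.
    for metric in metrics:
        for ref in metric.references:
            if ref not in known_fields:
                errors.append(f"metric {metric.id}: unknown reference {ref}")

    return errors
```

Calling `validate_project` with a dataset whose field points at a missing column, or a metric that references an undefined field, yields one error message per problem, which is roughly the kind of feedback an editor can surface inline.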

GoodData’s extension for VS Code is available now on the marketplace. You can also install it right from the Extensions tab in VS Code: just search for “GoodData”.

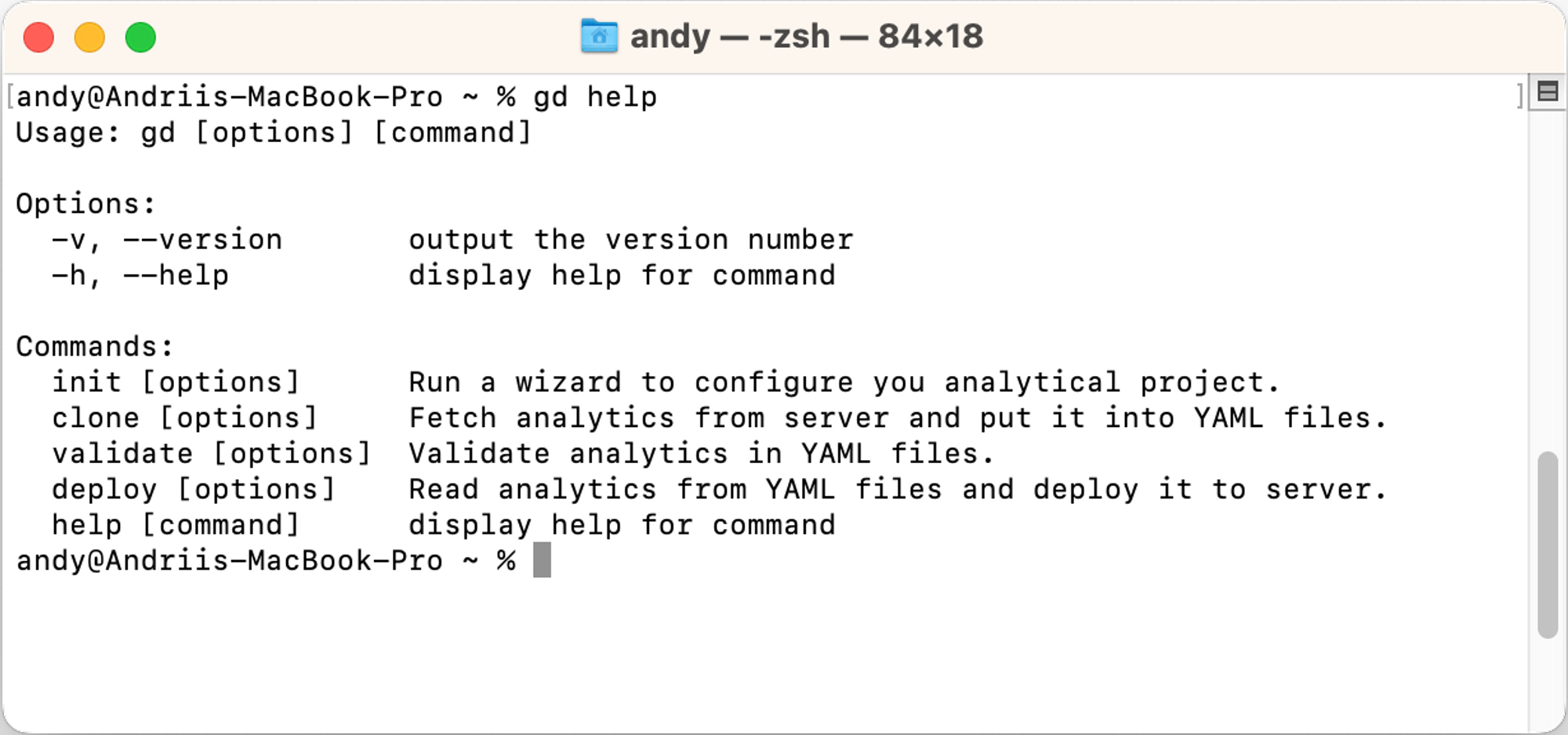

CLI Utility

GoodData CLI is a command-line app that’s meant to be used as a companion to the VS Code extension or separately in CI/CD pipelines. It’s written in JavaScript, so it requires Node.js, and it can be installed directly from npm (npm i -g @gooddata/code-cli).

GoodData CLI provides four commands. Some are more interesting when used in combination with the VS Code extension (init and clone), while others have been built with CI/CD pipelines in mind (validate and deploy).

The Workflow

No matter how good your tools are and how efficient you are at writing code, you won’t get far without a solid workflow: one that prevents human errors, yet is flexible enough not to get in the way when you’re on a roll. Let’s see what the setup and CI/CD pipelines could look like for an analytics project.

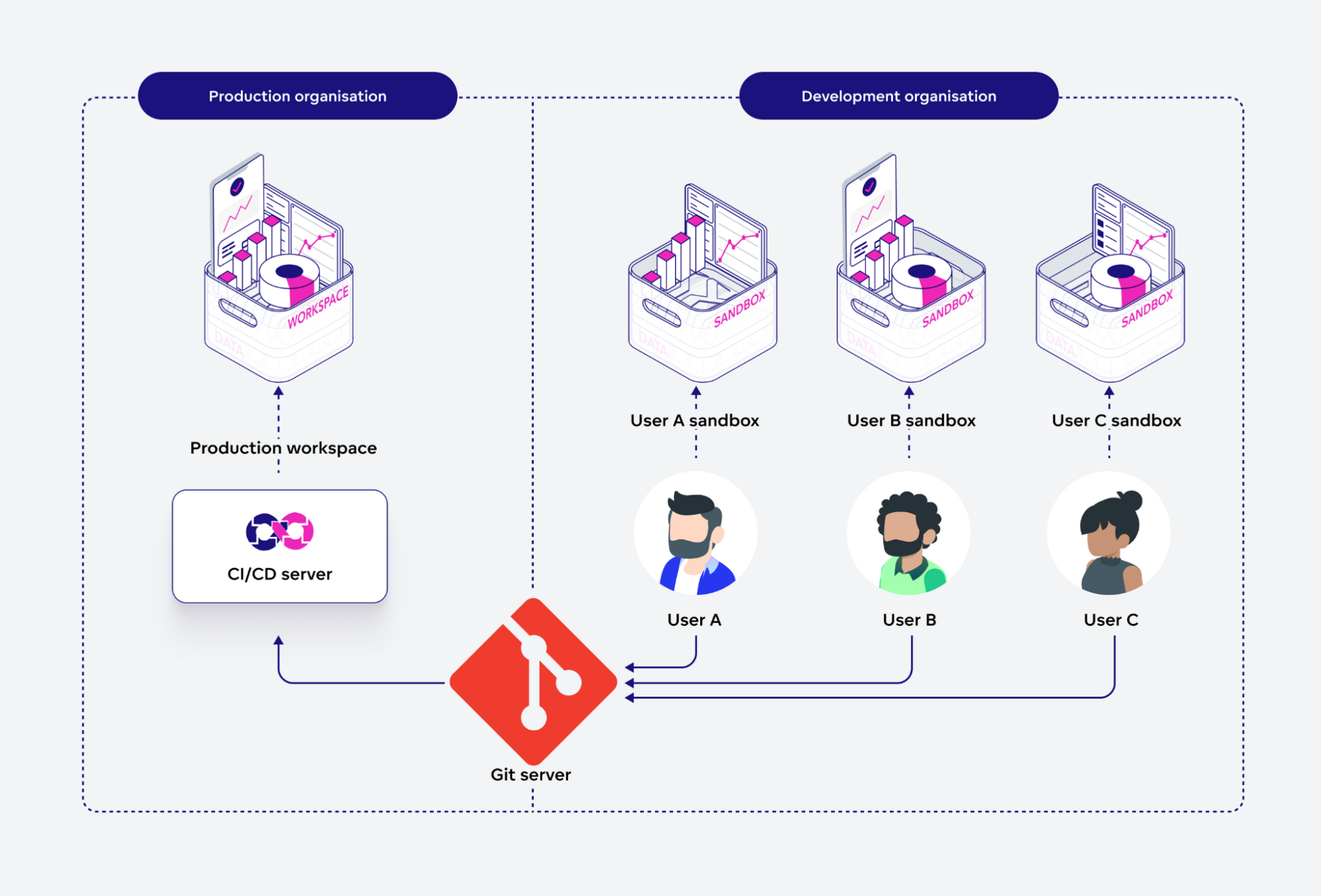

The Setup

First of all, every analytics engineer needs to have the “manage” permission at the organization level on GoodData Cloud. Ideally, you’ll want to have two organizations: one for development, where all analytics engineers get full access, and another for production, where only CI/CD pipelines can push changes.

Next, each analytics engineer should ideally have their own sandbox workspace within the development organization. That’s because changes need to be deployed in order to run previews for datasets and metrics. If several people shared the same dev workspace, there would be a risk of overwriting each other’s work and ending up with unreliable previews.

With such a setup, every analytics engineer on your team will be able to work independently in their own sandbox, without any risk of inadvertently affecting production. All changes to the production environment are made by CI/CD pipelines after proper gating: code review and automated tests.

CI/CD Pipelines

If all you need is to propagate the work that analytics engineers are doing to the production server, the CI/CD setup can be very simple. Here is an example for GitHub Actions.

First, you’ll need to gate any new code that’s being merged to the main branch. GoodData CLI can validate the project and ensure there are no obvious errors. The following pipeline will execute validation on every Pull Request to the main branch. If you also forbid direct pushes to the branch and make the checks mandatory for Pull Requests in your repo settings, you can be sure that no invalid code will ever be merged there.

    name: GoodData Analytics Gating
    on:
      pull_request:
        branches:
          - 'main'
    jobs:
      gate:
        runs-on: ubuntu-latest
        env:
          # Define your token in GitHub secrets
          GOODDATA_API_TOKEN: ${{ secrets.GOODDATA_API_TOKEN }}
        steps:
          - name: Checkout code
            uses: actions/checkout@v3
          - name: Set up NodeJS
            uses: actions/setup-node@v3
          - name: Install GoodData CLI
            run: npm i -g @gooddata/code-cli
          - name: Validate against staging environment
            run: gd validate --profile staging

Next, you’ll want to deploy the new version of analytics after the merge. If your company is embracing Continuous Delivery, this can be your production deployment. If not, you can point it at a staging environment and have other pipelines for production, perhaps triggered manually.

    name: GoodData Analytics Deployment
    on:
      push:
        branches:
          - 'main'
    jobs:
      gate:
        runs-on: ubuntu-latest
        env:
          # Define your token in GitHub secrets
          GOODDATA_API_TOKEN: ${{ secrets.GOODDATA_API_TOKEN }}
        steps:
          - name: Checkout code
            uses: actions/checkout@v3
          - name: Set up NodeJS
            uses: actions/setup-node@v3
          - name: Install GoodData CLI
            run: npm i -g @gooddata/code-cli
          - name: Validate against production environment
            run: gd validate --profile production
          - name: Deploy to production
            run: gd deploy --profile production --no-validate

Note that in the example above we’ve separated the validation and deployment steps. That’s done purely for our convenience when reading the pipeline results. Technically, every deploy command first runs validation, unless you pass the --no-validate option.
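The same validate-then-deploy sequence can also be reproduced outside GitHub Actions, for example in a local release script. The Python sketch below is a minimal illustration and not part of the GoodData tooling: the helper names `pipeline_commands` and `run_pipeline` are hypothetical, and only the `gd validate`, `gd deploy`, `--profile`, and `--no-validate` invocations are taken from the pipeline above. It assumes the `gd` executable is on the PATH and that a profile with the given name is configured.

```python
import subprocess


def pipeline_commands(profile, split_validation=True):
    """Build the gd command lines for one deployment.

    With split_validation=True, validation runs as its own step and the
    deploy step skips it via --no-validate, mirroring the workflow above.
    Otherwise a single deploy command is returned, which validates first.
    """
    if split_validation:
        return [
            ["gd", "validate", "--profile", profile],
            ["gd", "deploy", "--profile", profile, "--no-validate"],
        ]
    return [["gd", "deploy", "--profile", profile]]


def run_pipeline(profile, split_validation=True, runner=subprocess.run):
    """Run each command in order; check=True aborts on the first failure."""
    for command in pipeline_commands(profile, split_validation):
        runner(command, check=True)


if __name__ == "__main__":
    # "staging" is the profile name used in the gating workflow above.
    run_pipeline("staging")
```

Because `subprocess.run` is called with `check=True`, a failed validation raises an exception and the deploy command never runs, which matches the behavior of sequential steps in a CI job.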

There’s a catch to this setup, though. GoodData for VS Code only covers the semantic layer of your project (and will soon cover the analytics layer). But there is much more to a typical project: data source definitions, data filters, workspace hierarchies, user management, permissions, and so on. Furthermore, you might want to have several workspaces with different semantic layers in a single organization. How do you orchestrate a whole deployment? Well, that’s where the older brothers of GoodData for VS Code come in: Declarative API and Python SDK. I’ve made a demo project showing what a whole setup might look like, with analytics defined by GoodData for VS Code and the rest done with a Python script. Feel free to fork it on GitHub.
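As a rough idea of what such an orchestration script could look like, here is a minimal Python sketch. It is not taken from the demo project: the `run_deployment` helper, the step names, and their order are assumptions made for illustration, and each step is a placeholder callable where a real script would call the Python SDK or the GoodData CLI.

```python
def run_deployment(steps):
    """Run (name, action) steps in order and stop at the first failure.

    Returns the names of the completed steps. A failing step raises a
    RuntimeError that names the step, so the pipeline log shows where
    the deployment stopped.
    """
    completed = []
    for name, action in steps:
        try:
            action()
        except Exception as exc:
            raise RuntimeError(f"deployment step '{name}' failed") from exc
        completed.append(name)
    return completed


if __name__ == "__main__":
    # Placeholder actions; the order below is an assumption, not a rule.
    steps = [
        ("data sources", lambda: print("register data sources")),
        ("workspace hierarchy", lambda: print("create workspaces")),
        ("semantic layer", lambda: print("deploy definitions with gd")),
        ("users and permissions", lambda: print("assign permissions")),
    ]
    print(run_deployment(steps))
```

Keeping each concern as a separate named step makes it easy to see in the pipeline output which part of the project failed to deploy.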

What’s Next?

GoodData for VS Code is currently available as a public beta, and we’re committed to developing it further into a stable release. Here are a few topics we’re looking into:

- Adding support for visualization and dashboard definitions in code.

- Integration with other “as code” tools, both up the data pipeline (e.g. ELT tools like dbt or Meltano) and down the pipeline (like our own React SDK).

- Test automation for data analytics.

What feature would you like to see implemented next? If you want to be part of the story, reach out to us on our community Slack channel with feedback and suggestions.

Want to try GoodData for VS Code yourself? Here is a good place to start. To use it, you’ll need a GoodData account. The best way to obtain one is to register for a free trial.
